Create and share Worksheets

Worksheets provide a flexible way to filter, model and explore your data. Since modifications in a Worksheet do not change the underlying dataset definitions, you can explore your data freely.

Worksheets are composed of one or more Queries. Each Query in a Worksheet can be independently constructed. Each Query can have any number of Inputs and associated OPAL.

Worksheet tutorial

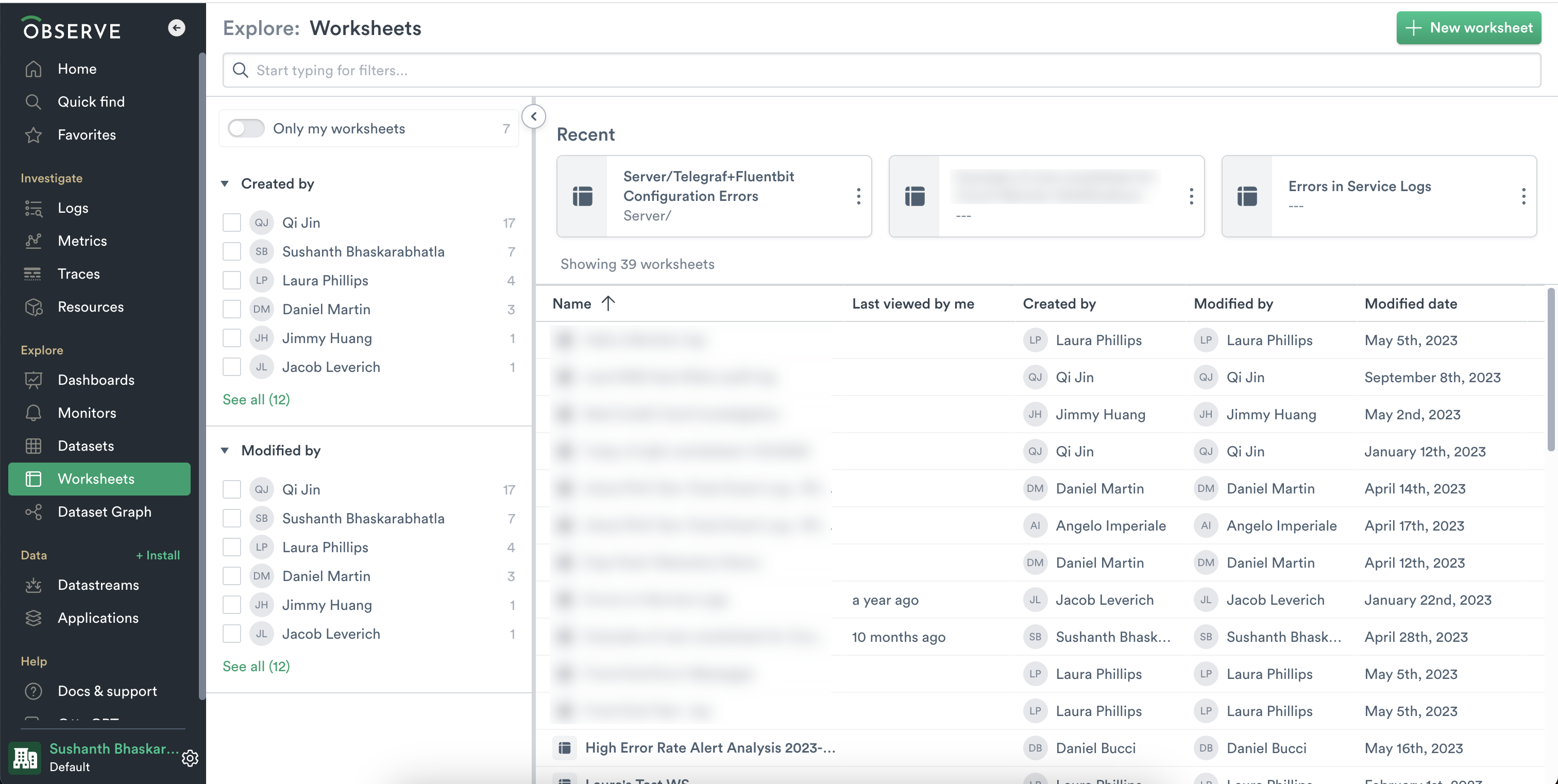

From the left navigation, click on Worksheets to view all the Worksheets in your Observe instance.

Now to get started with this tutorial, create a New Worksheet using the button on the top right.

This tutorial uses data from a Kubernetes Container Logs dataset. Add Container Logs as an input to your first query.

Search your data

Once you've gotten this data into your Worksheet, the simplest way to start searching or filtering is to use the filter bar at the top of the Worksheet. You can always toggle to the OPAL tab to see what OPAL was generated by the filter bar.
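As a rough illustration of what the OPAL tab might show, a filter-bar expression such as stream = "stderr" (used later in this tutorial) would typically correspond to a filter verb. This is a sketch only; the exact OPAL generated in your instance may differ.

```opal
// Keep only rows whose stream column equals "stderr"
filter stream = "stderr"
```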

Filter your data

Clicking on the Down arrow at the top of a column opens up the column context menu and displays options for working with data in a single column. The type of options depends on the data type in the column.

To filter down to only errors for this Container, select Filter from the stream column menu and choose stderr.

Note that the corresponding filter appears in the filter bar within Expression Builder.

stream = "stderr"

Visualize your data

You can easily visualize your data by selecting the Chart option within the expression builder. Try selecting Chart.

Below, we see a sharp spike in errors over the past hour.

In order to zoom out and see all errors for all services, let's remove the filter for payment-service and group the chart by Container. We can see that payment-service really is erroring at a high rate. We've found something interesting here and now we can share our worksheet with our team to continue the investigation.
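For reference, a chart like this could also be written by hand in the OPAL tab. The sketch below assumes the dataset has a column named container and uses a one-minute bucket. Both are illustrative choices, not values taken from the tutorial, and the output name error_count is hypothetical.

```opal
// Keep only error output
filter stream = "stderr"
// Count error rows per one-minute bucket, one series per container
// (container is an assumed column name)
timechart 1m, error_count:count(1), group_by(container)
```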

That's the end of this exercise. To learn more about the types of visualizations supported in Observe, see Visualization types.

Share a Worksheet in Observe

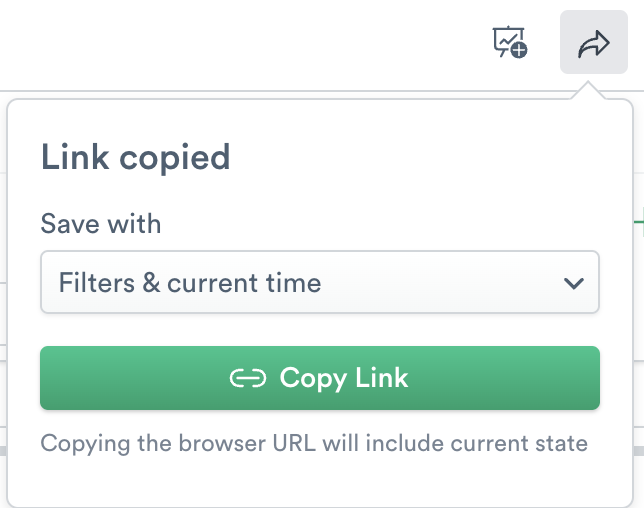

Often, when investigating an incident or simply exploring your data, you'll want to share your findings with someone else. To do this, use the Share icon next to Favorites. It's important to use the Share icon instead of simply copying the browser URL, so that the link captures the state (filters and time) that you intend to share.

Select one of the three Save options to share your object with the correct state:

- No filters and time - defaults to the saved version of the Worksheet.

- Filter and relative time - displays current filters with data in the relative timeframe in the Select Time Frame menu.

- Filters and current time - displays the latest version based on the current time.

Click Copy Link to copy the link to your clipboard and then provide the link to the Observe member.

More options in the column context menu

Earlier, we used the column context menu to add a filter to our data. The column context menu contains a variety of options beyond just filtering:

- Filter - filter data by a single field.

- Remove empty cells - remove empty cells from the column.

- Create as visualization - create a visual representation of data in a column.

- Extract from string - extract parameters depending on the type of string.

- Sort A -> Z (ascending order)

- Sort Z -> A (descending order)

- Hide column - hide the column from view.

- Convert - convert the column to another data type:

  - int - integer

  - float - floating-point type

  - time - timestamp, date, time, interval

  - JSON - JavaScript Object Notation type

- Add parameters

  - Existing - add existing parameters to the column data.

  - Create new - create a new parameter.

Some columns allow you to extract JSON from the data. Use this to create additional columns of data. In this worksheet, selecting Extract from JSON on the parameter column displays a list of fields that you can select and create columns. You can click Preview to see what your extraction displays as columns. Click Cancel to exit the Extract from JSON option.
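The Convert and Extract from JSON menu options have rough hand-written counterparts in OPAL. The following is a minimal sketch under stated assumptions: status_text and payload are hypothetical string columns, user_id is a hypothetical JSON field, and the OPAL that the menu actually generates may look different.

```opal
// status_text and payload are hypothetical string columns
// Cast a string column to an integer
make_col status_code:int64(status_text)
// Parse a JSON string into an object
make_col payload_json:parse_json(payload)
// Pull one field out of the parsed object into its own column
make_col user_id:string(payload_json.user_id)
```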

Inspect raw data for Worksheet inputs

Sometimes, you might want to open one of your Worksheet's inputs to inspect the raw data. You can inspect your inputs via the Manage Inputs button under the OPAL tab.

From this view of your inputs, you can add additional inputs, or you can open any given input in a new tab to inspect the raw data for that input.

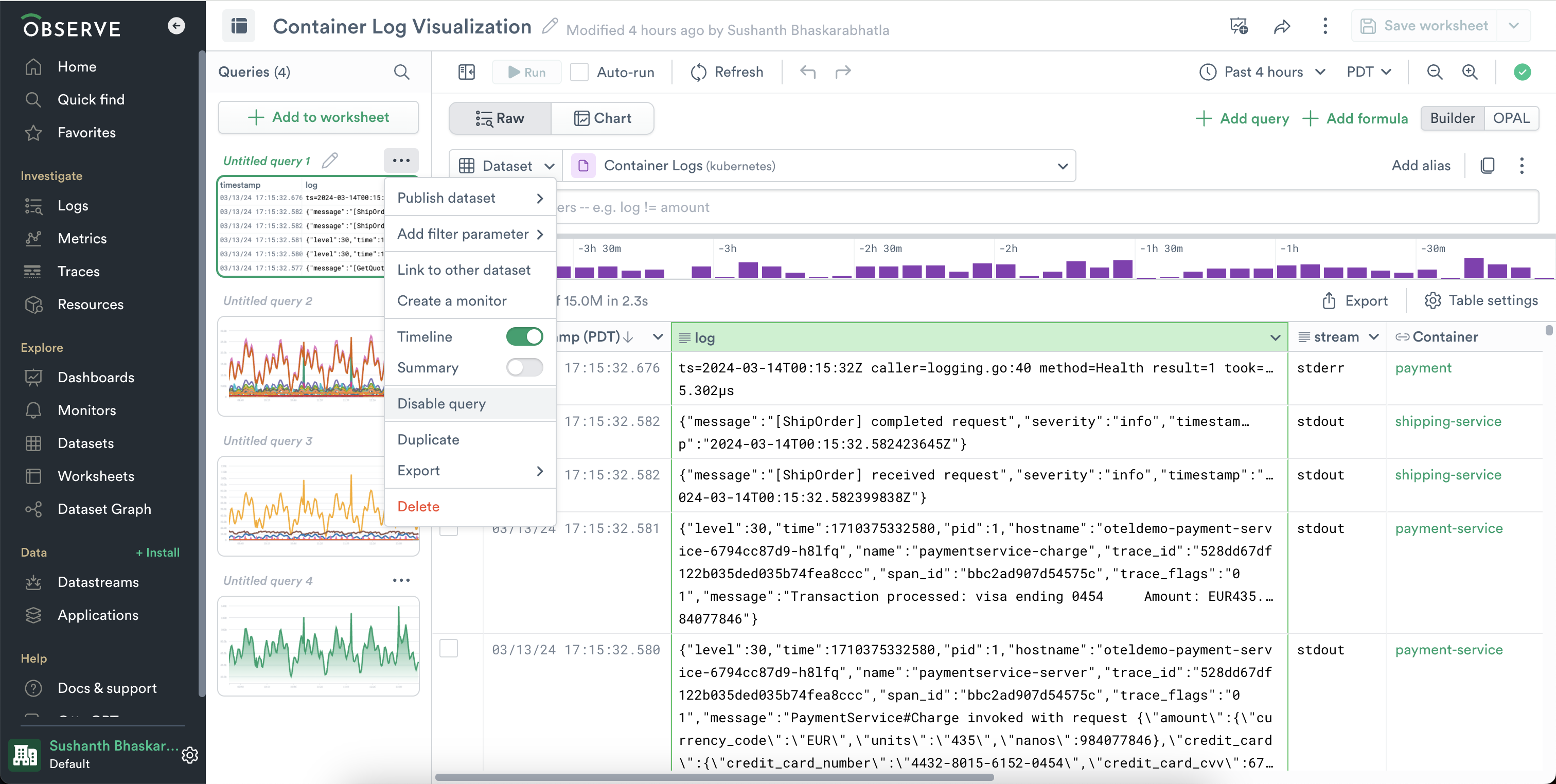

Disable queries

If you have a long-running or expensive query, you may occasionally want to stop it from running while still being able to modify its OPAL. You can disable a query via the "..." menu on each Query in the left-hand rail.

This per-query menu offers other options as well:

- Publish dataset - publish the output of this query as a new dataset.

- Add filter parameter - add filter parameter to this query.

- Link to dataset - connect this dataset to an existing dataset that contains a primary key.

- Create a monitor - create a monitor based on the output of this query.

- Timeline - show or hide the timeline view, per query.

- Summary - show or hide a summary, per query.

- Disable - disable this query to prevent it from running.

- Duplicate - duplicate this query within the same worksheet.

- Export - export this query as CSV or JSON.

- Delete - delete this query.

Pivot to resources

When you open the context menu from a cell containing a resource, the resource is listed in blue. Click that line for options, including "Open in Resource Explorer." This option keeps the context established by your Worksheet, such as time or parameters, so that you can search for relevant data more effectively.

Create new objects from Worksheets

The Worksheet is a useful tool for exploring data. Those explorations can lead to creating new useful objects, such as dashboard cards, monitors, Resource Datasets, and other types of Datasets.

Create a dashboard card from a worksheet

A Worksheet stage can be added to a dashboard by selecting the stage, then clicking the Add worksheet to dashboard button at top right. You can select any existing dashboard, or create a new one.

Create a monitor from a worksheet

A Worksheet stage can be used to create a new Monitor by selecting the stage, then opening the stage's context menu and selecting Create a Monitor. Count, Text Value, or Promotion monitor types can be created through this path.

Create a Dataset from a worksheet

A Worksheet stage can be used to create a new Dataset by selecting the stage, then opening the stage's context menu and selecting Publish Dataset. The type of Dataset is automatically set by the OPAL that has been written. For instance, starting with a Worksheet of log data with some filtering will produce an Event dataset. To produce a Resource dataset, use make_resource before clicking Publish Dataset.
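A minimal sketch of the make_resource step, assuming the stage has container and podName columns (hypothetical names) and that a one-hour expiry suits the data. Adjust the key, attributes, and expiry to your own dataset before publishing.

```opal
// Build a resource keyed by container; podName is carried as an attribute
// (container and podName are assumed column names)
make_resource options(expiry:duration_hr(1)), podName:podName, primary_key(container)
```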

Note: A Worksheet stage that uses parameters cannot be used to create a dataset at this time. Remove the parameters to create a dataset from this path.

Export data

To download the data displayed in a Worksheet, click the Export button on the right. Note that Export may not be immediately available on some Worksheet queries that do not produce a table of data; you may need to set the visualization type to Table and click Run. When exporting, you may select CSV or JSON format, and a maximum size limit (one thousand, ten thousand, or one hundred thousand rows). Note that hidden fields will be included. Use the pick_col OPAL verb to reduce the width of downloaded data.
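For example, a pick_col step added at the end of the query could narrow the export to a few columns. The column names here are assumptions about the Container Logs dataset, not names confirmed by this page.

```opal
// Keep only the columns needed in the export
// (timestamp, container, and log are assumed column names)
pick_col timestamp, container, log
```

Keeping the timestamp column is a reasonable default, since time-based datasets generally rely on it.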
